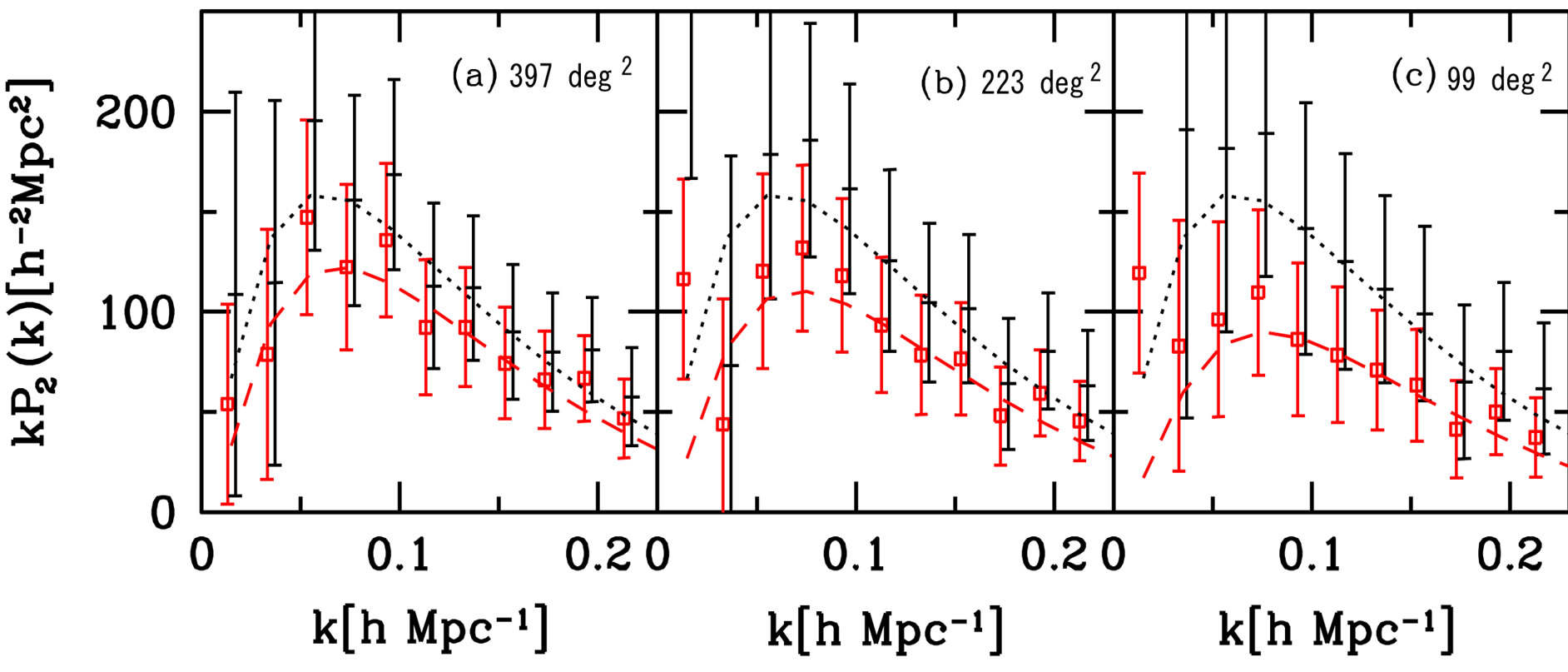

The Fisher matrix is a widely used tool to forecast the performance of future experiments and to approximate the likelihood of large data sets. Most forecasts of cosmological parameters in galaxy clustering studies rely on the Fisher matrix approach for large-scale experiments such as DES, Euclid or SKA. Here, we improve upon the standard method by taking into account three effects: the finite window function, the correlation between redshift bins and the uncertainty on the bin redshift. The first two effects are negligible only in the limit of infinite surveys. The third effect, in contrast, is negligible for infinitely small bins. We show how to take these effects into account and what their impact on forecasts for a Euclid-type experiment will be. The main result of this paper is that the windowing and the bin cross-correlation induce a considerable change in the forecasted errors, of the order of 10–30 per cent for most cosmological parameters, while the redshift bin uncertainty can be neglected for bins smaller than roughly Δz = 0.1.

Key words: methods: statistical, surveys, galaxies: statistics, cosmological parameters, large-scale structure of Universe

1 INTRODUCTION

An important task of cosmology is to study the composition and evolution of the Universe. Different models have been introduced, which rely on cosmological parameters such as the Hubble constant, the primordial scalar spectral index and the matter abundances. From a theoretical perspective, it is crucial to distinguish between these models and to determine which of them best approximate the observed data.

In a few years, large-scale surveys like Euclid (Laureijs et al. 2011), DESI (DESI Collaboration 2016), HETDEX (Hill et al. 2008), eBOSS (eBOSS Collaboration 2016), BigBOSS (Schlegel et al. 2011) and LSST (LSST Science Collaboration et al. 2015) will provide huge data sets containing information on galaxy positions at high redshift. These data will allow us to study how the observed clustering of galaxies evolves over time and how gravitational lensing is generated by the large-scale structures in the Universe. The data will mainly be encoded in 3D or angular power spectra and in higher order moments, since these descriptors are usually the direct outcome of cosmological theories. They will then be combined with cosmic microwave background data, in particular from the Planck satellite (Planck Collaboration XIII 2016).

The first attempts to characterize large-scale structure and measure the power spectrum from data were presented in Yu & Peebles (1969) and Groth & Peebles (1977). Baumgart & Fry (1991) generalized this result to redshift surveys and full three-dimensional (3D) galaxy positions. Feldman, Kaiser & Peacock (1994) then provided an estimator of the power spectrum and, in a subsequent pioneering paper, Tegmark (1997a) introduced an optimal method for estimating the power spectrum based on Bayesian statistics and the Fisher matrix formalism (Fisher 1935). Given the measured power spectrum, a maximum likelihood analysis then yields the best estimate of the parameters that characterize the theoretical power spectrum. The first attempts to apply this method on linear scales were made by Fisher, Scharf & Lahav (1994) and Heavens & Taylor (1995).

The two most commonly employed methods for formalizing the theoretical power spectrum are the following. The first uses real-space coordinates. The second expresses the power spectrum in angular coordinates and redshift space, so that it is directly related to the observable angular correlation function. The latter method is based on a spherical harmonic decomposition of the projected density in redshift shells, as shown in Heavens & Taylor (1995) (for recent applications, see Montanari & Durrer 2012, 2015; Bonaldi et al.
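
Feldman, Kaiser & Peacock (1994) weight each galaxy to balance shot noise against sample variance. The sketch below is a minimal illustration under stated assumptions. It computes the FKP weight w = 1/(1 + n̄P₀) and the closely related effective volume V_eff = Σ [n̄P/(1 + n̄P)]² ΔV that is commonly used in galaxy-clustering Fisher forecasts. The function names, the fiducial amplitude `p0` and the three-shell numbers are hypothetical illustrations, not values from the paper.

```python
import numpy as np


def fkp_weight(nbar, p0):
    """FKP weight w = 1 / (1 + nbar * P0), up to an overall normalization.

    nbar: mean galaxy number density at each position, e.g. in (h/Mpc)^3
    p0:   assumed fiducial power spectrum amplitude, e.g. in (Mpc/h)^3
    """
    return 1.0 / (1.0 + np.asarray(nbar, dtype=float) * p0)


def effective_volume(nbar, dvol, pk):
    """Effective volume V_eff(k) = sum over cells of [nbar P / (1 + nbar P)]^2 dV.

    Cells with nbar * P >> 1 (sample-variance limited) count almost fully;
    cells with nbar * P << 1 (shot-noise limited) barely contribute.
    """
    x = np.asarray(nbar, dtype=float) * pk
    return float(np.sum((x / (1.0 + x)) ** 2 * np.asarray(dvol, dtype=float)))


# Hypothetical example: three redshift shells with decreasing density.
nbar = np.array([1e-3, 3e-4, 1e-4])   # (h/Mpc)^3
dvol = np.array([1e9, 2e9, 3e9])      # (Mpc/h)^3
weights = fkp_weight(nbar, p0=1e4)    # nbar * P0 = 10, 3, 1
veff = effective_volume(nbar, dvol, pk=1e4)
```

In this example the densest shell dominates the effective volume even though it is the smallest. The sparse shell contributes only a quarter of its geometric volume.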
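
A maximum likelihood fit to a measured power spectrum can be illustrated in its simplest form, with one amplitude A scaling a fixed fiducial shape. This is a sketch under two assumptions: a Gaussian band-power likelihood, and known, uncorrelated errors. Real analyses use the full and generally correlated covariance. `ml_amplitude` and the band powers below are hypothetical. The model is linear in A, so the maximum has a closed form. The curvature of the log-likelihood, which is the Fisher information, then gives the error exactly.

```python
import numpy as np


def ml_amplitude(p_obs, p_fid, sigma):
    """Maximum-likelihood amplitude A for the model P(k) = A * P_fid(k).

    Assumes independent Gaussian errors sigma on each measured band power,
    so -2 ln L = sum((p_obs - A * p_fid)^2 / sigma^2) + const is quadratic
    in A. Returns (A_hat, 1-sigma error on A).
    """
    p_obs, p_fid, sigma = (np.asarray(a, dtype=float) for a in (p_obs, p_fid, sigma))
    fisher = np.sum(p_fid**2 / sigma**2)               # F_AA = d^2(-ln L)/dA^2
    a_hat = np.sum(p_obs * p_fid / sigma**2) / fisher  # solves d ln L / dA = 0
    return a_hat, 1.0 / np.sqrt(fisher)


# Hypothetical noiseless band powers generated with A = 2.
p_fid = np.array([1.0, 2.0, 3.0])
a_hat, a_err = ml_amplitude(2.0 * p_fid, p_fid, sigma=np.ones(3))
```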
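
Take Gaussian-distributed data with mean μ(θ) and a parameter-independent covariance C. The Fisher matrix is then F_ij = (∂μ/∂θ_i)ᵀ C⁻¹ (∂μ/∂θ_j), and the forecast marginalized 1σ errors are √((F⁻¹)_ii). If C itself depends on θ, an additional term ½ Tr[C⁻¹ ∂_iC C⁻¹ ∂_jC] appears. The NumPy sketch below covers this textbook case only. The function names and the straight-line toy model are hypothetical illustrations, not the paper's galaxy-clustering Fisher matrix.

```python
import numpy as np


def fisher_matrix(derivs, cov):
    """Fisher matrix F_ij = (dmu/dtheta_i)^T C^{-1} (dmu/dtheta_j).

    derivs: array of shape (n_params, n_data); row i holds dmu/dtheta_i
    cov:    data covariance of shape (n_data, n_data), assumed to be
            independent of the parameters
    """
    derivs = np.asarray(derivs, dtype=float)
    cinv_derivs = np.linalg.solve(cov, derivs.T)  # columns: C^{-1} dmu/dtheta_j
    return derivs @ cinv_derivs


def marginalized_errors(fisher):
    """Forecast 1-sigma errors with all other parameters marginalized."""
    return np.sqrt(np.diag(np.linalg.inv(fisher)))


def conditional_errors(fisher):
    """Forecast 1-sigma errors with all other parameters held fixed."""
    return 1.0 / np.sqrt(np.diag(fisher))


# Hypothetical toy model mu(x) = a + b*x observed at x = 0, 1, 2, 3
# with independent Gaussian errors sigma = 0.5.
x = np.arange(4.0)
derivs = np.vstack([np.ones_like(x), x])  # rows: dmu/da, dmu/db
cov = 0.25 * np.eye(4)
F = fisher_matrix(derivs, cov)            # [[16, 24], [24, 56]]
sig_marg = marginalized_errors(F)         # sqrt(56/320), sqrt(16/320)
sig_cond = conditional_errors(F)          # 1/4, 1/sqrt(56)
```

Marginalized errors are never smaller than conditional ones. The gap grows with the degeneracy between parameters, which here is encoded in the off-diagonal element F_ab.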
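
A hypothetical toy shows why ignoring the correlation between redshift bins can shift forecasted errors. One amplitude parameter is measured in two bins with equal variance σ² and correlation coefficient ρ. With the full covariance the Fisher information is 2/(σ²(1 + ρ)). Dropping the cross-bin terms gives 2/σ², so the error ratio is √(1 + ρ). The setup and numbers are illustrative assumptions, not results from the paper.

```python
import numpy as np


def fisher_1param(deriv, cov):
    """Fisher information for a single parameter: d^T C^{-1} d."""
    deriv = np.asarray(deriv, dtype=float)
    return float(deriv @ np.linalg.solve(cov, deriv))


def compare_bin_correlation(sigma=1.0, rho=0.3):
    """Return (error with full covariance, error ignoring cross-bin terms).

    Hypothetical setup: one amplitude parameter, two redshift bins with
    equal variance sigma**2 and correlation coefficient rho between them.
    """
    deriv = np.array([1.0, 1.0])  # d(observable in each bin)/d(amplitude)
    full = sigma**2 * np.array([[1.0, rho], [rho, 1.0]])
    diag = np.diag(np.diag(full))  # keep only the auto-bin variances
    err_full = 1.0 / np.sqrt(fisher_1param(deriv, full))
    err_diag = 1.0 / np.sqrt(fisher_1param(deriv, diag))
    return err_full, err_diag


err_full, err_diag = compare_bin_correlation()
# Analytically err_full / err_diag = sqrt(1 + rho), about 1.14 for rho = 0.3.
```

In this toy, a positive correlation means the bins carry partly redundant information, so neglecting it makes the forecast too optimistic. A negative ρ would have the opposite effect.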
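
The finite window function means that a survey of limited size measures the true power spectrum convolved with the squared Fourier transform of its window, P_W(k) ∝ ∫ d³k′ P(k′) |W(k − k′)|², up to normalization conventions. The window narrows in k as the survey volume grows, so P_W approaches P only for an infinite survey. The code below is a deliberately simplified 1D toy on a uniform k grid. It assumes a Gaussian |W|² of hypothetical width `window_width` and is not the 3D window treatment used in the paper.

```python
import numpy as np


def windowed_spectrum(k, pk, window_width):
    """Toy 1D window convolution of a power spectrum on a uniform k grid.

    Assumption: |W|^2 is a Gaussian of width `window_width` (hypothetical).
    Each output value is a normalized weighted average of pk around k_i,
    a discrete stand-in for P_W(k) = int P(k') |W(k - k')|^2 dk'.
    """
    k = np.asarray(k, dtype=float)
    pk = np.asarray(pk, dtype=float)
    dk = k[:, None] - k[None, :]                   # pairwise k_i - k_j
    w2 = np.exp(-0.5 * (dk / window_width) ** 2)   # assumed Gaussian |W|^2
    w2 /= w2.sum(axis=1, keepdims=True)            # each row sums to one
    return w2 @ pk


k = np.linspace(0.01, 0.3, 30)
pk = 1e4 * (k / 0.1) ** -1.5                              # hypothetical power law
pw_wide = windowed_spectrum(k, pk, window_width=0.02)     # small survey
pw_narrow = windowed_spectrum(k, pk, window_width=1e-6)   # "infinite" survey
```

With a window much narrower than the grid spacing, the convolution reduces to the identity. A broad window visibly smooths the steep low-k end of the spectrum.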
